A creative director at a mid-sized agency recently described a familiar scene: it is 1:00 AM, a client presentation is scheduled for 9:00 AM, and the generative media team is stuck. They have a prompt that produces “almost” the right visual—a sleek, futuristic laboratory with a specific color palette—but in every single variation, a glass beaker is fused into a lab technician’s hand, or the brand’s logo on the wall looks like a collection of alien runes.

The team has already spent three hours hitting the “regenerate” button. They have burned through thousands of compute credits and, more importantly, dozens of expensive man-hours. This is the “randomness tax” of generative AI. A workflow that relies solely on global prompting is a gamble that a stochastic process will eventually stumble upon perfection. For professionals, hope is not a viable production strategy.

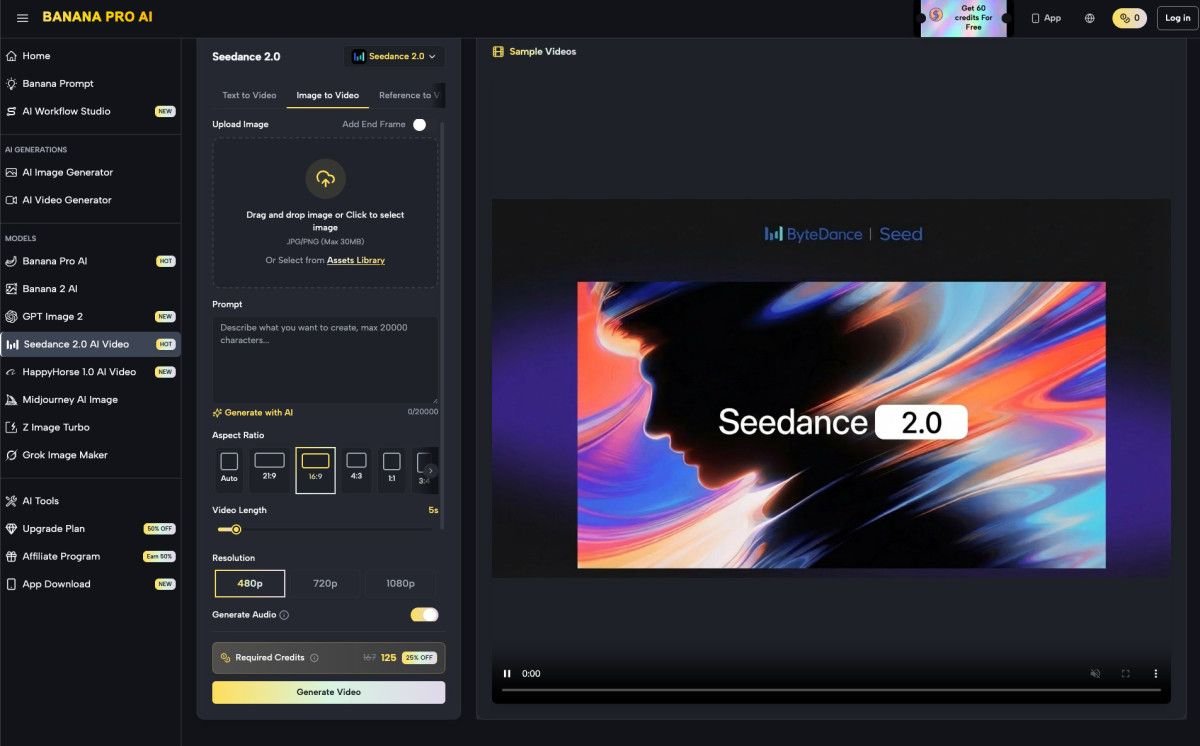

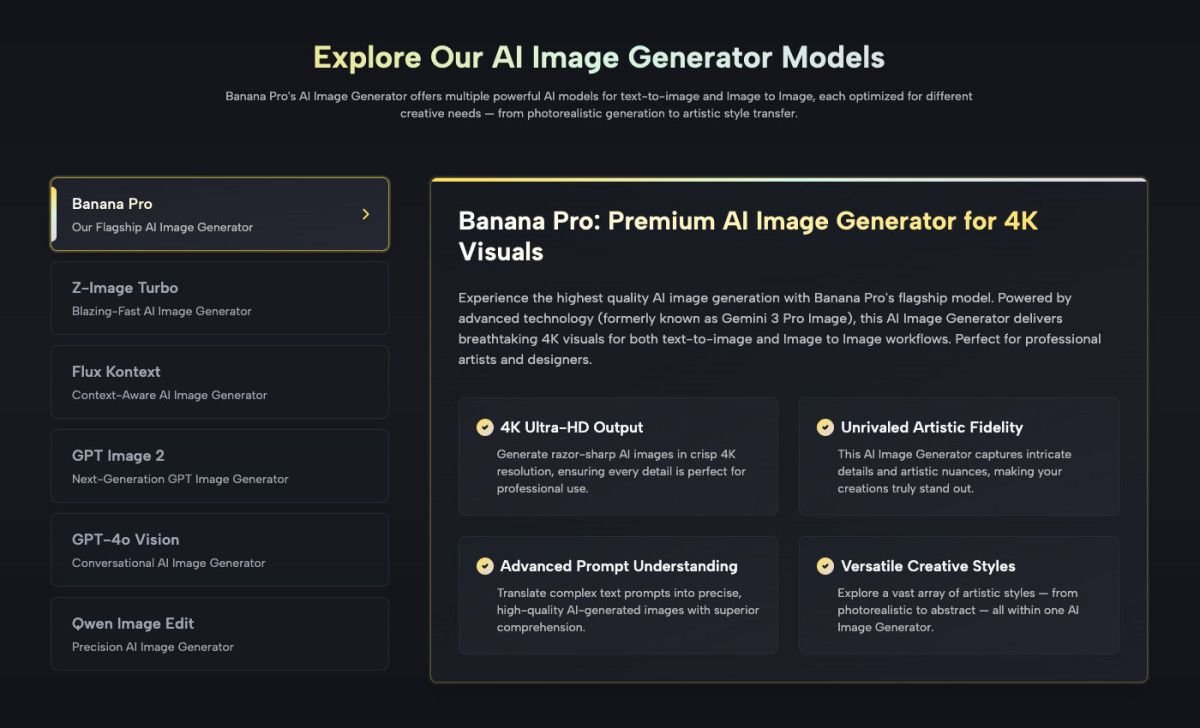

To bridge the gap between “interesting AI experiment” and “deliverable asset,” the workflow must shift from prompt-dependence to architectural control. This requires a move toward localized editing, regional inpainting, and canvas-based manipulation. By treating the initial generation as a raw material rather than a final product, studios can use tools like Nano Banana Pro to finalize assets with surgical precision, slashing the time lost to the cycle of endless rerolls.

The Efficiency Wall in Prompt-First Production

The initial allure of generative AI was the promise of the “one-shot” miracle. You type a sentence, and a masterpiece appears. While this works for social media avatars or conceptual mood boards, it fails the moment a client provides a specific feedback note, such as “make the lighting on the subject’s face warmer, but don’t change the background.”

If you try to achieve this via a global prompt change—adding “warm lighting on face” to the existing string—the entire image will likely change. The subject’s clothes might shift from blue to red, the background architecture might mutate, and the composition might flip. You have fixed the lighting but broken everything else.

In a professional environment, “generative luck” is the enemy of a predictable pipeline. The cost of a project is not just the subscription fee for the software; it is the time spent by a designer evaluating and discarding “almost right” images. When an editor can pinpoint a specific region and modify only those pixels, the tool's ROI rises sharply, because the parts of the image that already work are no longer put at risk by each fix. This transition from “prompting” to “editing” marks the difference between a toy and a tool.

Architectural Control via Regional Inpainting

Regional inpainting allows a creator to define a mask—a specific area of the image—and tell the AI to re-imagine only that section. In the context of Nano Banana, this means you can keep the 90% of the image that works and focus your creative energy on the 10% that doesn’t.
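The core idea of a mask can be sketched in a few lines. The example below is an illustration only, not how Nano Banana works internally: it assumes a tiny grayscale image stored as nested lists, and the function name `composite_masked` is hypothetical. It shows the guarantee that matters to a production team: pixels outside the mask are copied from the base image untouched.

```python
from typing import List

Image = List[List[int]]   # grayscale values 0-255, kept tiny for illustration
Mask = List[List[bool]]   # True marks a pixel the editor wants regenerated


def composite_masked(base: Image, regenerated: Image, mask: Mask) -> Image:
    """Return a new image that takes masked pixels from `regenerated`
    and every other pixel from `base`."""
    if not (len(base) == len(regenerated) == len(mask)):
        raise ValueError("base, regenerated, and mask must have the same height")
    out: Image = []
    for base_row, new_row, mask_row in zip(base, regenerated, mask):
        if not (len(base_row) == len(new_row) == len(mask_row)):
            raise ValueError("base, regenerated, and mask must have the same width")
        # Pick the regenerated pixel only where the mask is True.
        out.append([n if m else b for b, n, m in zip(base_row, new_row, mask_row)])
    return out


base = [[10, 10, 10],
        [10, 10, 10]]
regenerated = [[99, 99, 99],
               [99, 99, 99]]
mask = [[False, True, False],
        [False, False, False]]

result = composite_masked(base, regenerated, mask)
print(result)
```

Only the single masked pixel changes; the rest of the frame is carried over from the base image. A real editor would also blend the mask edge, which this sketch deliberately leaves out.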

Consider a video production pipeline where you need a series of consistent character portraits for a storyboard. Using a global prompt for each frame often results in “character drift,” where the subject’s facial features or clothing change subtly between images. A more effective workflow involves generating a strong base image and then using regional changes to swap out expressions or hand gestures while keeping the rest of the frame static.

This “segment-first” approach is particularly valuable for maintaining brand continuity. If a product shot requires a specific beverage can to be placed on a wooden table, the AI might get the table right but hallucinate the can’s label. By locking the table and using localized editing on the can itself, the designer maintains control over the composition’s structural integrity.

However, it is important to note a current limitation: regional inpainting is not a perfect eraser. If the underlying geometry of an image is fundamentally flawed (a person with three arms, for example), simply masking one arm and asking for “background” can leave behind ghosting artifacts or “edge bleeding,” where the AI struggles to blend the new pixels with the old.

Integrating Canvas Workflows into the Post-Production Stack

For video editors and designers, a generative tool should not be an island. It needs to function as a bridge to the rest of the production stack. The Banana AI canvas workflow operates as a pre-visualization and refinement space that sits between the initial idea and the final composite in software like After Effects or Photoshop.

When working within an AI Image Editor, the canvas allows for outpainting (expanding the borders of an image) and inpainting in the same workspace. This is crucial for video editors who might need to convert a vertical 9:16 mobile shot into a horizontal 16:9 cinematic frame. Rather than stretching the pixels or filling the sides with a blur effect, the editor can generate an extension of the environment that matches the existing lighting and texture.
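To get a feel for how much new imagery a 9:16 to 16:9 conversion demands, the arithmetic can be sketched as follows. This is a rough planning aid under stated assumptions, not a feature of any particular tool: the function name `outpaint_padding` is hypothetical, the source is kept at its original size and centered, and the 1080×1920 source resolution is just an example.

```python
from typing import Tuple


def outpaint_padding(width: int, height: int,
                     ratio_w: int, ratio_h: int) -> Tuple[int, int, int, int]:
    """Return (left, right, top, bottom) padding in pixels needed to reach
    the ratio_w:ratio_h aspect ratio without cropping or scaling the source."""
    # Compare aspect ratios by cross-multiplying to stay in integer math.
    if width * ratio_h < height * ratio_w:
        # Source is too narrow: extend the canvas horizontally.
        new_width = -(-height * ratio_w // ratio_h)  # ceiling division
        extra = new_width - width
        left = extra // 2
        return (left, extra - left, 0, 0)
    if width * ratio_h > height * ratio_w:
        # Source is too wide: extend the canvas vertically.
        new_height = -(-width * ratio_h // ratio_w)  # ceiling division
        extra = new_height - height
        top = extra // 2
        return (0, 0, top, extra - top)
    return (0, 0, 0, 0)  # already at the target ratio


left, right, top, bottom = outpaint_padding(1080, 1920, 16, 9)
new_width = 1080 + left + right
generated_share = (left + right) / new_width
print(left, right, top, bottom, f"{generated_share:.0%} of the frame is new")
```

For a 1080×1920 source, roughly two thirds of the final 16:9 frame has to be generated, which is why matching lighting and texture in the extension matters so much.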

Once the static asset is refined using Banana Pro, it can be moved into a video generation loop. This sequence—Refine Image -> Upscale -> Animate—is far more reliable than attempting to generate a high-fidelity video from a text prompt alone. By ensuring the “source of truth” (the static image) is perfect before it ever hits the video engine, you prevent the AI from magnifying small errors into glaring motion artifacts.

What Generative Editing Cannot Fix: The Hard Limits

Despite the advancements in Nano Banana Pro, it is vital for creative leads to understand where the technology currently plateaus. We are not yet at the stage of “total control,” and pretending otherwise leads to missed deadlines.

One significant limitation is the “cascading error” problem. If you attempt to inpaint a complex object into a scene where the perspective is already slightly off, the AI will often double down on that incorrect perspective. It lacks a true 3D understanding of the space; it is essentially guessing based on 2D patterns. If your base image has a “fisheye” distortion, the regional edit will likely inherit or exacerbate that distortion in a way that looks “uncanny” to the human eye.

Furthermore, lighting consistency remains a manual oversight task. While the AI is generally good at matching the colors of a mask to its surroundings, it often misses the directionality of light. If you inpaint a cat onto a sunny porch, the AI might give the cat the right fur color but fail to place the shadow in the same direction as the porch railing’s shadow. In these instances, it is often faster to revert to traditional Photoshop cloning or manual grading than to spend twenty minutes trying to “prompt” the shadow into existence. Acknowledging these friction points allows a team to decide when to use AI and when to stick with traditional digital artistry.

The Buyer’s Perspective: Calculating the Workflow ROI

From a business standpoint, the goal is to reduce the “cost per successful asset.” In the early days of generative media, companies focused on the novelty of the output. Now, they focus on the predictability of the output.
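One way to put a number on “cost per successful asset” is a simple expected-value model. The sketch below assumes each attempt succeeds independently with a fixed probability, so the expected number of attempts is one divided by that probability. Every figure in it (success rates, credit cost, review time, hourly rate) is a made-up placeholder for illustration, not measured data.

```python
def cost_per_successful_asset(success_rate: float,
                              compute_cost: float,
                              review_minutes: float,
                              hourly_rate: float) -> float:
    """Expected cost of one approved asset, assuming independent attempts.

    success_rate   -- probability that a single attempt is approved (0-1]
    compute_cost   -- compute spend per attempt, in currency units
    review_minutes -- designer time spent evaluating each attempt
    hourly_rate    -- designer cost per hour, in currency units
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    expected_attempts = 1 / success_rate
    cost_per_attempt = compute_cost + (review_minutes / 60) * hourly_rate
    return expected_attempts * cost_per_attempt


# Hypothetical inputs: full-frame rerolls rarely land; regional fixes land often.
global_reroll = cost_per_successful_asset(0.05, 0.10, 2, 60)
regional_edit = cost_per_successful_asset(0.50, 0.02, 2, 60)
print(f"global reroll: {global_reroll:.2f}, regional edit: {regional_edit:.2f}")
```

Under these placeholder numbers the designer's review time, not the compute bill, dominates the cost, which is consistent with the argument that predictability matters more than raw generation price.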

By utilizing the localized editing features of Nano Banana Pro, a studio can significantly reduce its compute overhead. Generating a small 512×512 patch to fix a specific hand or eye is computationally “cheaper” (in terms of both time and server load) than regenerating a full 2K or 4K frame. When multiplied across a project with hundreds of assets, these savings are non-trivial.
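The scale of that difference can be approximated by comparing pixel counts. This is only a proxy: actual compute cost depends on the model and on how much surrounding context the tool processes along with the patch. The frame sizes below are assumptions, since “2K” and “4K” are used loosely; here 2K is taken as 2048×1080 and 4K as 3840×2160.

```python
from typing import Tuple


def pixel_share(patch: Tuple[int, int], frame: Tuple[int, int]) -> float:
    """Return the patch's pixel count as a fraction of the frame's."""
    patch_w, patch_h = patch
    frame_w, frame_h = frame
    return (patch_w * patch_h) / (frame_w * frame_h)


PATCH = (512, 512)
FRAME_2K = (2048, 1080)   # assumed definition of "2K"
FRAME_4K = (3840, 2160)   # assumed definition of "4K" (UHD)

share_2k = pixel_share(PATCH, FRAME_2K)
share_4k = pixel_share(PATCH, FRAME_4K)
print(f"patch vs 2K: {share_2k:.1%}, patch vs 4K: {share_4k:.1%}")
```

By pixel count alone, the patch is on the order of a tenth of a 2K frame and a few percent of a 4K frame.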

The real ROI, however, lies in the turnaround time. Client revisions are the most expensive part of the creative process. If a client says, “I love this, but can the car be red instead of blue?” and the designer can solve that in three minutes via a regional mask rather than three hours of “re-prompting,” the profit margin on that project increases.

Building a production pipeline around Banana Pro and its suite of editing tools allows a studio to promise—and deliver—consistency. It moves the conversation away from “let’s see what the AI gives us” toward “let’s use the AI to build exactly what we need.” In the high-stakes world of professional media production, that shift from randomness to control is the only way to remain competitive. Professionalism in the age of AI isn’t about who can write the best prompt; it’s about who can best direct the output toward a specific, uncompromised vision.